Abstract

Objective: To identify the readability levels of measures used in assessing psychosis.

Methods: Measures were identified through a literature search. Fourteen measures met the inclusion criteria (written in English, developed in the US between 1997 and 2024, and publicly available) and were analyzed using 4 validated formulas: Gunning Fog, Simple Measure of Gobbledygook, FORCAST, and Flesch Reading Ease Score. Measures with an average readability score exceeding 6.00 were above the recommended reading level.

Results: All measures exhibited mean readability scores above the recommended sixth-grade level. The mean reading levels of the instruction and item sections were 9.08 (SD=1.44, range, 7.13–10.70) and 9.06 (SD=1.98, range, 7.08–13.79), respectively.

Conclusion: The findings indicate that self-report measures used in assessing psychosis are written above recommended reading levels and do not conform to suggested standards. This substantial gap in readability highlights the need for revisions that support accurate symptom assessment and effective treatment monitoring for individuals with psychotic disorders.

Prim Care Companion CNS Disord 2026;28(3):25m04077

Author affiliations are listed at the end of this article.

Self-reported measures are increasingly used to aid in the diagnosis of psychosis and to monitor response to treatment. An estimated 0.4% of the global population experiences psychosis each year.1 Several measures have been developed to assess people experiencing psychosis in both clinical and research settings. The American Medical Association (AMA) has determined that patient-facing materials should be written at a fifth- to sixth-grade reading level, below the national average reading level of eighth grade.2 Researchers have found that individuals with psychiatric conditions tend to have lower average reading levels than those without psychiatric conditions.3 This discrepancy has been attributed to decreased access to standard schooling and limited speech practice, often due to lower socioeconomic status.3 Furthermore, psychotic disorders can impair cognition and attention, resulting in functional impairments that make individuals more susceptible to reading difficulties.4

Many studies in psychiatry have shown that patient-facing materials are often not written in accordance with AMA recommendations. A study on the readability of measures of alcohol misuse found an average reading level of ninth grade for their instructions and eighth grade for their questions.5 Similar findings have been reported in studies examining binge-eating disorder and depression/anxiety, in which questionnaires had average reading levels of eighth and ninth grades, respectively, for both the instruction and item sections.6,7 Additionally, psychiatric pamphlets intended to provide information to individuals with mental health challenges typically have an average reading level of eighth grade.3 However, no data are currently available on the readability of measures designed specifically for psychosis. This study aims to calculate the overall readability of measures used in assessing psychosis, as well as the separate readability of the items and instructions within these measures. We hypothesize that the reading levels of these questionnaires will exceed the recommended standard.

METHODS

Systematic reviews and measures were identified through a literature search of the publicly available PsycINFO, PubMed, PubMed Central, and Google Scholar databases. To identify measures, we combined the Medical Subject Headings term “psychotic disorders” with “surveys and questionnaires,” “self-report,” “self-report questionnaire,” “self-report measure,” and “self-report instrument.”

Sixteen psychosis measures were identified from the systematic review by Kline and Schiffman6 and from our literature search; 14 of these met the inclusion criteria and were included in this study. The inclusion criteria were measures written in English, developed in the United States between 1997 and 2024 (the past 27 years), and publicly available. Exclusion criteria were clinician-observed measures, clinician-standardized interviews, and measures not developed in English in the United States. The following measures were included: Community Assessment of Psychic Experiences, Composite Psychosis Risk Questionnaire, Early Recognition Inventory based on IRAOS, General Health Questionnaire, Primary Care Checklist, Prodromal Questionnaire 16 Questions (PQ-16), Prodromal Questionnaire 8 Questions, Prodromal Screen (PROD), Prodromal Questionnaire Full Version, Prime Screen Revised, Abbreviated Youth Psychosis at Risk Questionnaire, Wisconsin Schizotypy Scales, Behavior and Symptom Identification Scale Revised Version, and Psychosis-Like Symptoms.6–15

Readability was assessed with Readable software (readable.com). Each measure was analyzed with 4 readability formulas available in Readable: Gunning Fog, Simple Measure of Gobbledygook (SMOG), FORCAST, and Flesch Reading Ease Score.16–19
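All 4 formulas are driven by a small set of text counts: sentences, words, syllables, and words of 1 or of 3 or more syllables. Readable's own tokenization and syllable-counting rules are not described here, so the following is only a minimal sketch that assumes a crude vowel-group syllable heuristic. The names `count_syllables` and `text_statistics` are hypothetical, and their counts will differ from Readable's on many words.

```python
import re


def count_syllables(word: str) -> int:
    """Hypothetical heuristic: estimate syllables by counting vowel groups.

    This is NOT Readable's algorithm; it treats y as a vowel and drops a
    trailing silent "e" (as in "make") but keeps "-le" (as in "table").
    """
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)  # every word is assumed to have at least 1 syllable


def text_statistics(text: str) -> dict:
    """Count the quantities the readability formulas need (assumed rules)."""
    # Treat runs of ".", "!" or "?" as sentence terminators.
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    syllables = [count_syllables(w) for w in words]
    return {
        "sentences": len(sentences),
        "words": len(words),
        "syllables": sum(syllables),
        "monosyllabic": sum(1 for s in syllables if s == 1),
        "polysyllabic": sum(1 for s in syllables if s >= 3),
    }
```

For the 2-sentence item "Do you hear voices? Other people do not hear them.", this sketch counts 10 words, 13 syllables, 7 monosyllabic words, and no polysyllabic words.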

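The Gunning Fog score is computed with the following equation, where polysyllabic words are words of 3 or more syllables:

$$\text{grade level} = 0.4 \times \left(\frac{\text{words}}{\text{sentences}} + 100 \times \frac{\text{polysyllabic words}}{\text{words}}\right)$$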
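The Gunning Fog equation can be expressed directly in code. This is a minimal sketch that takes the counts as inputs; the function name `gunning_fog` is hypothetical, and it does not apply the exclusions some versions of the index make when identifying "complex" words (for example, proper nouns or certain suffixes).

```python
def gunning_fog(words: int, sentences: int, polysyllabic: int) -> float:
    """Gunning Fog grade level = 0.4 * (average sentence length + % polysyllabic words)."""
    if words <= 0 or sentences <= 0:
        raise ValueError("words and sentences must be positive")
    average_sentence_length = words / sentences
    percent_polysyllabic = 100 * polysyllabic / words  # words of 3+ syllables, as a percentage
    return 0.4 * (average_sentence_length + percent_polysyllabic)
```

For example, a 100-word passage with 5 sentences and 10 polysyllabic words gives 0.4 × (20 + 10), or a grade level of 12.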

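The SMOG score is computed with the following equation, where the polysyllable count is the number of words of 3 or more syllables in a 30-sentence sample:

$$\text{grade level} = 3 + \sqrt{\text{polysyllable count}}$$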
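The simplified SMOG formula is defined on a 30-sentence sample. The sketch below assumes that, for passages of a different length, the polysyllable count is rescaled to a 30-sentence basis before taking the square root; that rescaling is an assumption, not necessarily what Readable does, and `smog_grade` is a hypothetical name.

```python
import math


def smog_grade(polysyllabic: int, sentences: int = 30) -> float:
    """Simplified SMOG grade level = 3 + sqrt(polysyllable count per 30 sentences)."""
    if sentences <= 0:
        raise ValueError("sentences must be positive")
    # Assumption: rescale the count when the sample is not exactly 30 sentences.
    scaled_count = polysyllabic * 30 / sentences
    return 3 + math.sqrt(scaled_count)
```

With 25 polysyllabic words in 30 sentences, the grade level is 3 + 5 = 8; with 8 polysyllabic words in 15 sentences, the rescaled count is 16 and the grade level is 7.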

The FORCAST score is computed with the following equation, where monosyllabic words are counted in a 150-word sample:

$$\text{grade level} = 20 - \frac{\text{monosyllabic words}}{10}$$
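FORCAST counts monosyllabic words in a 150-word sample. The sketch below assumes that shorter or longer passages are rescaled to a 150-word basis; that scaling step is an assumption for illustration, and `forcast_grade` is a hypothetical name.

```python
def forcast_grade(monosyllabic: int, words: int = 150) -> float:
    """FORCAST grade level = 20 - (N / 10), N = monosyllabic words per 150 words."""
    if words <= 0:
        raise ValueError("words must be positive")
    # Assumption: rescale to a 150-word basis when the sample length differs.
    monosyllabic_per_150 = monosyllabic * 150 / words
    return 20 - monosyllabic_per_150 / 10
```

Because FORCAST ignores sentence length, it tends to behave differently from the other indices on short, list-like questionnaire items: 100 monosyllabic words per 150 words already corresponds to a grade level of 10.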

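The Flesch Reading Ease Score is computed with the following equation; higher scores indicate easier text:

$$\text{score} = 206.835 - 1.015 \times \frac{\text{words}}{\text{sentences}} - 84.6 \times \frac{\text{syllables}}{\text{words}}$$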
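Unlike the other 3 indices, the Flesch Reading Ease formula returns an ease score (higher means easier) rather than a grade level, so it must be converted before it can be averaged with the others. This minimal sketch computes only the raw score; the grade-level conversion used in the study is not reproduced here, and `flesch_reading_ease` is a hypothetical name.

```python
def flesch_reading_ease(words: int, sentences: int, syllables: int) -> float:
    """Flesch Reading Ease = 206.835 - 1.015*(words/sentences) - 84.6*(syllables/words)."""
    if words <= 0 or sentences <= 0:
        raise ValueError("words and sentences must be positive")
    words_per_sentence = words / sentences
    syllables_per_word = syllables / words
    return 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word
```

For example, 100 words in 10 sentences with 130 syllables gives 206.835 − 10.15 − 109.98 = 86.705, a relatively easy text.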

These validated indices were chosen because they are commonly used throughout the United States to analyze text and have been used in prior psychiatric studies to assess the readability of measures and patient education materials. The formulas draw on different text features to calculate each score, including the number of syllables per word, sentence length, and the degree of deviation from basic word lists. The Gunning Fog, SMOG, and FORCAST indices report a grade level directly (eg, sixth grade, seventh grade). The Flesch Reading Ease Score, however, yields a reading-ease score rather than a grade level, so the authors manually converted it to a grade level for analysis. The readability of each measure was calculated by averaging the scores from all 4 indices, and the item and instruction sections were analyzed separately. Measures with an average readability greater than 6.00 were considered above the recommended reading level. The mean and SD of readability scores for each index across measures were computed. Data were analyzed in Microsoft Excel (version 16.49).
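The aggregation steps described above (average the 4 index grade levels per section, flag averages above 6.00, then summarize across measures) can be sketched as follows. This is an illustration under stated assumptions, not the authors' spreadsheet: the index labels and function names are hypothetical, the Flesch value is assumed to be already converted to a grade level, and the SD is assumed to be the sample SD (the Excel function used is not specified). The input values below are made up, not study data.

```python
from statistics import mean, stdev

THRESHOLD = 6.00  # sixth-grade ceiling used in the study

# Hypothetical labels; the Flesch score is assumed to be converted to a grade level already.
INDICES = {"Gunning Fog", "SMOG", "FORCAST", "Flesch (converted)"}


def measure_readability(grades: dict) -> float:
    """Average the 4 index grade levels for one section (items or instructions)."""
    if set(grades) != INDICES:
        raise ValueError(f"expected grade levels for {sorted(INDICES)}")
    return mean(list(grades.values()))


def summarize(section_scores: list) -> dict:
    """Mean, sample SD, range, and number of measures above the threshold."""
    return {
        "mean": mean(section_scores),
        "sd": stdev(section_scores),  # sample SD (assumed, like Excel's STDEV.S)
        "range": (min(section_scores), max(section_scores)),
        "above_threshold": sum(score > THRESHOLD for score in section_scores),
    }


# Hypothetical example values, not taken from the study.
example = measure_readability(
    {"Gunning Fog": 7.0, "SMOG": 8.0, "FORCAST": 10.0, "Flesch (converted)": 9.0}
)
print(example)
print(summarize([example, 6.0, 10.0]))
```

Note that the threshold test is strict (greater than 6.00), so a section averaging exactly 6.00 is counted as meeting the recommendation.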

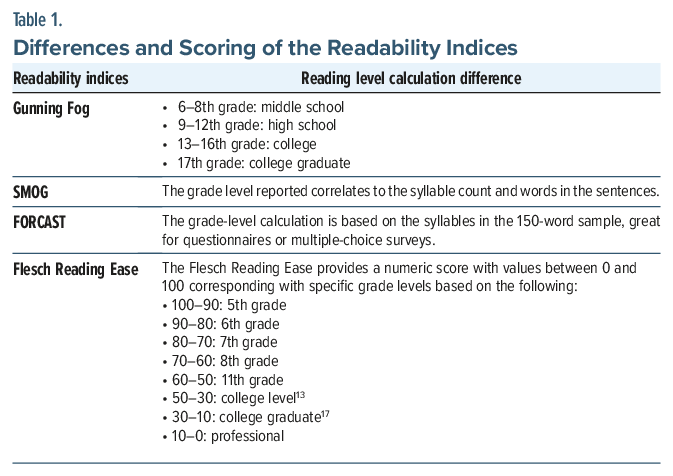

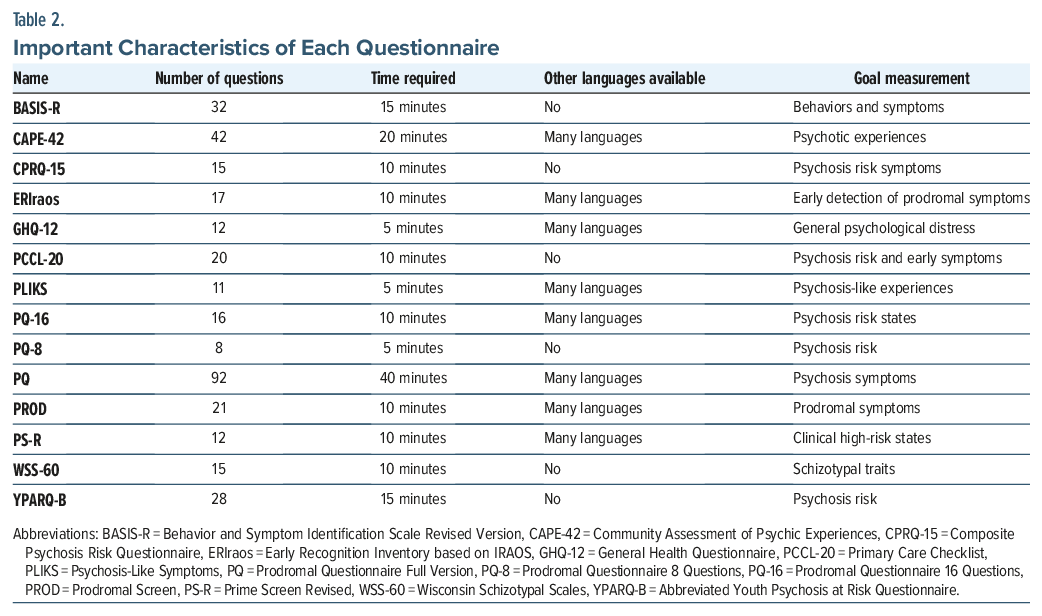

Table 1 shows the conversion between Flesch Reading Ease scores and grade levels and highlights the differences among the readability indices.20 Table 2 summarizes key characteristics of each questionnaire.

RESULTS

All measures included in our study had a mean readability score above the recommended sixth-grade level, and in every measure, both the item and instruction sections were written above the recommended reading level. The mean reading levels of the instruction and item sections were 9.08 (SD = 1.44; range, 7.13–10.70) and 9.06 (SD = 1.98; range, 7.08–13.79), respectively. A high school reading level or above was required for 8 of 14 measures (57%).

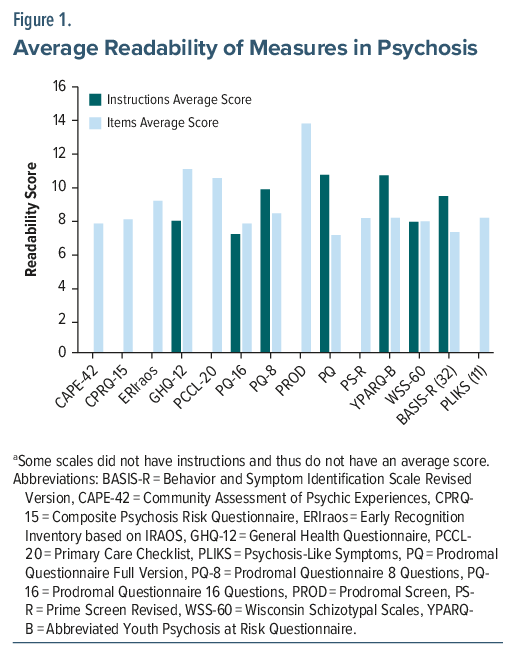

Figure 1 shows the average readability of the instruction and item sections for each psychosis measure.21 Readability varied widely across measures. The PQ-16 had the lowest instruction reading level (7.13), with items averaging 7.77, making it relatively easy to read, although still above the sixth-grade recommendation. In contrast, the PROD had the highest item reading level (13.79), reflecting a substantially higher level of complexity that may be challenging for individuals with lower literacy. This variability suggests that some measures may need to be modified to ensure that they are accessible to all patients, particularly those with lower literacy levels.

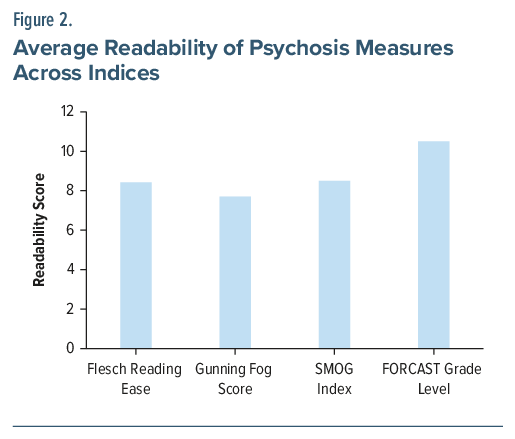

Figure 2 shows the readability scores of each measure on the Gunning Fog, SMOG, FORCAST, and Flesch Reading Ease Score indices.21 The mean grade levels were 7.70 for Gunning Fog, 8.42 for Flesch Reading Ease (after conversion to a grade level), 8.50 for SMOG, and 10.50 for FORCAST. The scores from all indices correspond to US reading grade levels.

DISCUSSION

Self-reported measures are particularly vital to the practice of clinical psychiatry because they provide a method to screen for and monitor psychiatric symptoms in a structured and standardized manner. Behavior and conscious experience are difficult to assess with other common diagnostic modalities, such as laboratory testing and radiological imaging; self-reported measures can therefore fill this gap in assessment and advance measurement-based care.22 Evidence shows that early detection of psychosis leads to better outcomes.23 Consequently, ensuring the readability of these standard screening tools is an important way to reduce health disparities in diverse populations. Because none of the psychosis questionnaires evaluated was within the recommended reading level, the validity of these measures as tools for screening and monitoring psychosis is called into question. This finding is consistent with other readability studies of psychiatric questionnaires, which have shown high and variable reading level requirements.24

Using the Institute of Medicine’s definition of health literacy as “the degree to which individuals can obtain, process, and understand basic health information and services needed to make appropriate health decisions,” the National Assessment of Adult Literacy found that more than one-third of US adults have low health literacy.25 Studies have shown low health literacy in psychiatric populations, especially among those experiencing poverty; 1 study26 found that up to 76% of persons experiencing homelessness and receiving mental health care read at or below a seventh-grade level. Literacy can also affect a person’s ability to understand medication labels, appointment times, and mental health resources, so it is crucial for providers to be aware of any literacy challenges faced by those under their care.26 Future studies on the role of reading literacy may reveal further barriers to care and better define the value of screening tools in psychiatry.

This study has several limitations. The formulas used provide readability estimates and do not consider other factors affecting comprehension, such as font, text size, and layout. These formulas are based on US grade levels and may not correspond directly to reading levels in other English-speaking countries. Because we analyzed only English-language questionnaires, our findings cannot be applied to translations into other languages. Developing visual and audio screening tools could help bridge cultural differences in how mental illnesses are understood. With the rapid development of virtual reality (VR) and artificial intelligence technologies in health care, their incorporation into psychiatric assessments may offer a potential solution to literacy barriers.27

VR could address literacy barriers by allowing providers to assess, in real time, a person’s response to a controlled virtual environment. One study evaluated paranoia in people at high risk for psychosis using a VR train ride that most people would consider neutral.27 This design allowed the researchers to distinguish paranoia from actual persecution with an objective and replicable modality. Such VR assessments can remove reliance on a person’s ability to complete a questionnaire and limit self-report bias. Future studies could explore incorporating VR into questionnaire-based assessments.

In summary, our study found that every self-reported psychosis measure we evaluated was written above the reading level recommended by the AMA. These results add to the evidence that readability is underused in the validity testing of self-reported measures, which usually focuses on performance relative to other modalities for diagnosing and monitoring psychopathology.28 Given the evidence that early detection and intervention in psychosis are crucial for better outcomes, addressing readability is essential to ensure that those with lower literacy receive the care they need in a timely manner. Future efforts should simplify language, integrate diverse patient perspectives, and explore innovative formats to enhance comprehension. By doing so, we can bridge the gap between clinical tools and the diverse literacy levels of those we aim to help, ultimately leading to more accurate and equitable mental health assessments.

Article Information

Published Online: May 5, 2026. https://doi.org/10.4088/PCC.25m04077

© 2026 Physicians Postgraduate Press, Inc.

Submitted: September 8, 2025; accepted January 26, 2026.

To Cite: Nickel J, Shanmugam SK, Khan A, et al. Readability of self-reported measures in psychosis. Prim Care Companion CNS Disord 2026;28(3):25m04077.

Author Affiliations: Wake Forest University School of Medicine, Winston-Salem, North Carolina (Nickel, Shanmugam, Khan); Atrium Health Wake Forest Baptist Medical Center, Winston-Salem, North Carolina (Shah, Gligorovic).

Corresponding Author: Sujeeth Krishna Shanmugam, BS, Atrium Health Wake Forest Baptist Medical Center, Winston-Salem, North Carolina.

Financial Disclosure: None.

Funding/Support: None.

Acknowledgments: An early version of this article was presented as a poster at the American Psychiatric Association annual meeting; May 4–8, 2024; New York, New York. Kayla Lyon, MD, assisted in revising this poster. Dr Lyon (Atrium Health Wake Forest Baptist Medical Center, Winston-Salem, North Carolina) has no conflicts of interest related to the subject of this article.

Clinical Points

- Most psychosis self-report measures are written above recommended reading levels, reducing accessibility for many patients with cognitive, educational, or symptomatic limitations.

- Readability should become a standard psychometric property evaluated during measure development alongside reliability and validity.

References (28)

- Moreno-Küstner B, Martín C, Pastor L. Prevalence of psychotic disorders and its association with methodological issues. A systematic review and meta-analyses. PLoS One. 2018;13(4):e0195687.

- Health literacy: report of the Council on Scientific Affairs. Ad Hoc Committee on Health Literacy for the Council on Scientific Affairs, American Medical Association. JAMA. 1999.

- Sentell TL, Shumway MA. Low literacy and mental illness in a nationally representative sample. J Nerv Ment Dis. 2003;191(8):549–552.

- Steenkamp LR, Bolhuis K, Blanken LME, et al. Psychotic experiences and future school performance in childhood: a population-based cohort study. J Child Psychol Psychiatry. 2021;62(3):357–365.

- McHugh RK, Sugarman DE, Kaufman JS, et al. Readability of self-report alcohol misuse measures. J Stud Alcohol Drugs. 2014;75(2):328–334.

- Kline E, Schiffman J. Psychosis risk screening: a systematic review. Schizophr Res. 2014;158(1–3):11–18.

- Bukenaite A, Stochl J, Mossaheb N, et al. Usefulness of the CAPE-P15 for detecting people at ultra-high risk for psychosis: psychometric properties and cut-off values. Schizophr Res. 2017;189:69–74.

- Ising HK, Veling W, Loewy RL, et al. The validity of the 16-item version of the Prodromal Questionnaire (PQ-16) to screen for ultra high risk of developing psychosis in the general help-seeking population. Schizophr Bull. 2012;38(6):1288–1296.

- Loewy RL, Pearson R, Vinogradov S, et al. Psychosis risk screening with the Prodromal Questionnaire-brief version (PQ-B). Schizophr Res. 2011;129(1):42–46.

- Heinimaa M, Salokangas RK, Ristkari T, et al. PROD-screen: a screen for prodromal symptoms of psychosis. Int J Methods Psychiatr Res. 2003;12(2):92–104.

- Kobayashi H, Nemoto T, Koshikawa H, et al. A self-reported instrument for prodromal symptoms of psychosis: testing the clinical validity of the PRIME Screen-Revised (PS-R) in a Japanese population. Schizophr Res. 2008;106(2–3):356–362.

- Fonseca-Pedrero E, Ortuño-Sierra J, Chocarro E, et al. Psychosis risk screening: validation of the Youth Psychosis At-Risk Questionnaire-brief in a community-derived sample of adolescents. Int J Methods Psychiatr Res. 2017;26(4):e1543.

- Fonseca-Pedrero E, Paino M, Ortuño-Sierra J, et al. Dimensionality of the Wisconsin Schizotypy Scales-brief forms in college students. ScientificWorldJournal. 2013;2013:625247.

- Eisen SV, Normand SL, Belanger AJ, et al. The revised Behavior and Symptom Identification Scale (BASIS-R): reliability and validity. Med Care. 2004;42(12):1230–1241.

- Zammit S, Horwood J, Thompson A, et al. Investigating if psychosis-like symptoms (PLIKS) are associated with family history of schizophrenia or paternal age in the ALSPAC birth cohort. Schizophr Res. 2008;104(1–3):279–286.

- Lee SE, Farzal Z, Kimple AJ, et al. Readability of patient-reported outcome measures for chronic rhinosinusitis and skull base diseases. Laryngoscope. 2020;130(10):2305–2310.

- Dorismond C, Farzal Z, Thompson NJ, et al. Readability analysis of pediatric otolaryngology patient-reported outcome measures. Int J Pediatr Otorhinolaryngol. 2021;140:8–11.

- Lee SE, Farzal Z, Ebert CS, et al. Readability of patient-reported outcome measures for head and neck oncology. Laryngoscope. 2020;130(12):2839–2842.

- Cherla D, Sanghvi S, Choudry O, et al. Readability assessment of internet-based patient education materials related to endoscopic sinus surgery. Laryngoscope. 2012;122(8):1649–1654.

- Rao SJ, Nickel JC, Kiell EP, et al. Readability of commonly used patient-reported outcome measures in laryngology. Laryngoscope. 2022;132(5):1069–1074.

- Readable. Readability score | Readability test | Reading level calculator. Accessed August 6, 2024. https://readable.com/

- Agarwal N, Port JD, Bazzocchi M, et al. Update on the use of MR for assessment and diagnosis of psychiatric diseases. Radiology. 2010;255(1):23–41.

- Anderson KK, Norman R, MacDougall A, et al. Effectiveness of early psychosis intervention: comparison of service users and nonusers in population-based health administrative data. Am J Psychiatry. 2018;175(5):443–452.

- McHugh RK, Behar E. Readability of self-report measures of depression and anxiety. J Consult Clin Psychol. 2009;77(6):1100–1112.

- Kutner M, Greenberg E, Jin Y, Paulsen C. The Health Literacy of America’s Adults: Results From the 2003 National Assessment of Adult Literacy. 2006. https://nces.ed.gov/pubsearch/pubsinfo.asp?pubid=2006483

- Christensen RC, Grace GD. The prevalence of low literacy in an indigent psychiatric population. Psychiatr Serv. 1999;50(2):262–263.

- Geraets CNW, Wallinius M, Sygel K. Use of virtual reality in psychiatric diagnostic assessments: a systematic review. Front Psychiatry. 2022;13:828410.

- Dentch GE, O’Farrell TJ, Cutter HSG. Readability of marital assessment measures used by behavioral marriage therapists. J Consult Clin Psychol. 1980;48(6):790–792.
